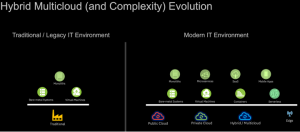

Infographic: How EDI Has Impacted Different Industries

Our friends over at A3logics, a software and app development company based in the USA, recently created an infographic on “How EDI has impacted different industries.” EDI (electronic data interchange) is trending and is expected to see significant growth in the future.